Running a Multimodal LLM locally with Ollama and LLaVA

Last Update: Jun 7, 2024

AI changed software development. This is how the pros use it.

Written for working developers, Coding with AI goes beyond hype to show how AI fits into real production workflows. Learn how to integrate AI into Python projects, avoid hallucinations, refactor safely, generate tests and docs, and reclaim hours of development time—using techniques tested in real-world projects.

Introduction

Multimodal AI is changing how we interact with large language models. In the beginning we typed in text, and got a response. Now we can upload multiple types of files to an LLM and have it parsed. Blending natural language processing and computer vision, these models can interpret text, analyze images, and make recomendations.

Until recently multimodal AI was limited to hosted solutions, the “big name” tools. Services like ChatGPT, Claude, Bard, and so many others. Multimodal AI is now available to run on your local machine, thanks to the hard work of folks at the Ollama project and the LLaVA: Large Language and Vision Assistant project. Today, we’re going to dig into it. In this article I’ll show you these tools in action, and show you how to run them yourself in minutes.

Why Multimodal AI?

Picture this. A culinary artist, Ashley wants to recreate authentic dishes from various countries and cultures. She faces some big challenges. How can these be recreated accurately? She tries deciphering traditional recipes, some written in foreign languages and confusing to recreate.

She finds an old recipe book in a small bookstore filled with traditional recipes from around the world. It’s full of excellent dishes that look fun to make. But how?

She takes a picture of the recipes and photographs in the book and uploads them to an LLM. The system recognizes the text, evaluates the images, and provides step-by-step instructions on how to prepare the dishes.

Awesome right?

Let’s say you’re a photographer. Multimodal AI can read text and evaluate images beyond just providing descriptions. It can interpret emotions on people’s faces and suggest improvements for future shots based on the compositional rules of photography. The possibilities are endless.

This is just a small glimpse into how you can use multimodal AI. It’s useful and fun. It’s recently become available with large hosted services, but now you can run it on your own computer. Before we dig into the features of this model, here’s how you can set it up.

How You Can Run Multimodal AI on Your Computer

I’ll show you some great examples, but first, here is how you can run it on your computer. I love running LLMs locally. You don’t have to pay monthly fees; you can tweak, experiment, and learn about large language models. I’ve spent a lot of time with Ollama, as it’s a nifty solution for this.

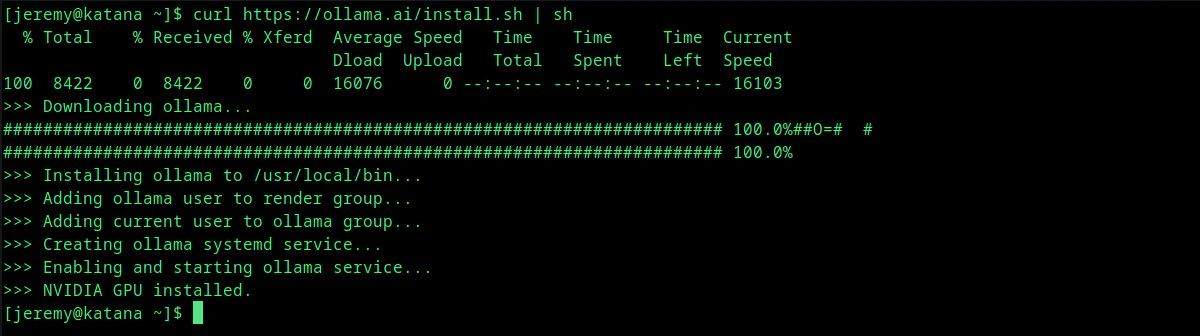

So let’s do it. To install Ollama with LLaVA:

Linux

In Linux, run this command:

curl https://ollama.ai/install.sh | sh

It will pull down what you need and install it.

Yep, that’s it.

Mac OSX

On a Mac, download and extract this file:

https://ollama.ai/download/Ollama-darwin.zip

Windows

In Windows, you can use WSL to run Ollama and it runs just like it does in Linux.

Loading the Model

Once it is installed, you can simply run the following:

ollama run llava

This loads up the LLaVA 1.5-7b model.

You’ll see a screen like this:

And you’re ready to go.

How to Use it

If you’re new to this, don’t let the empty prompt scare you. It’s a chat interface!

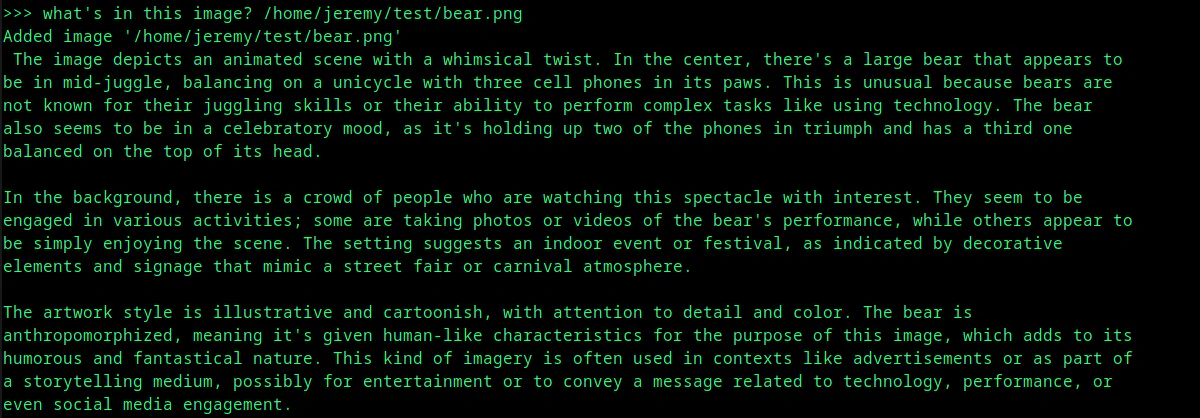

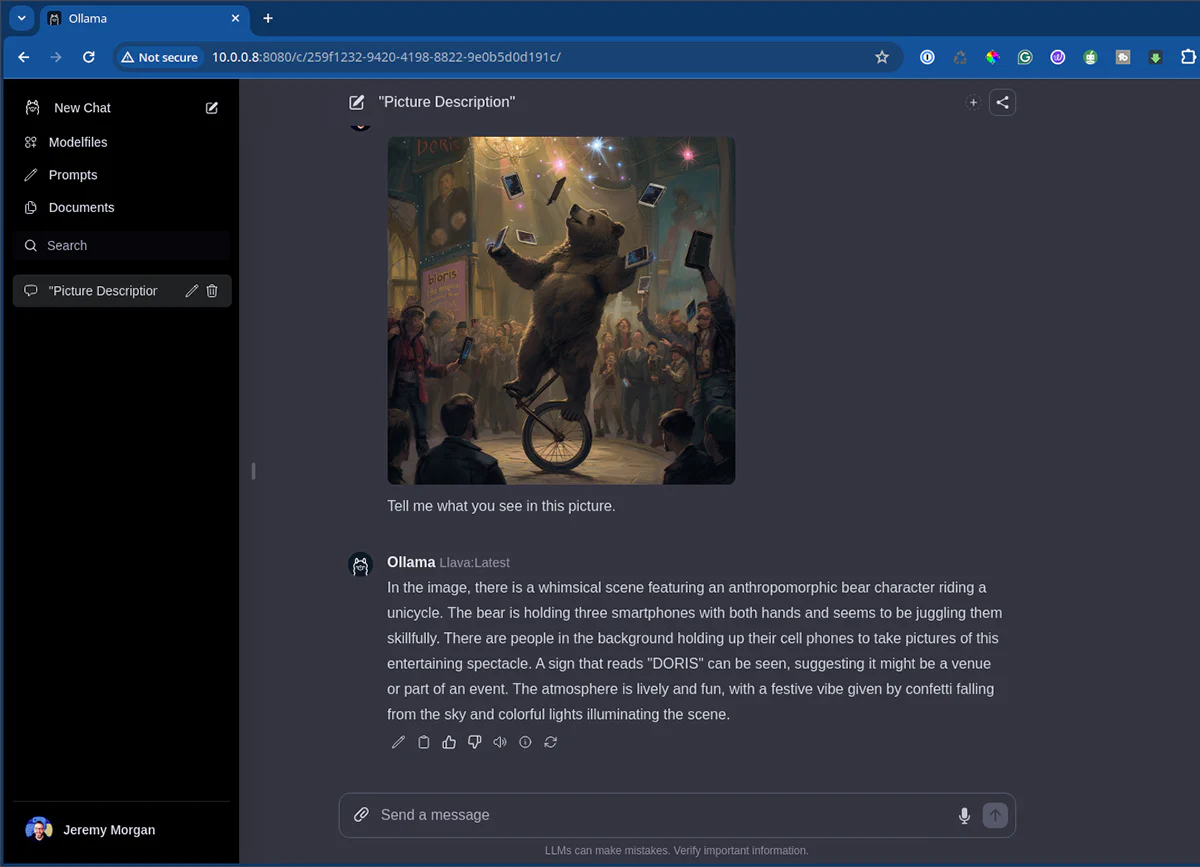

I’m starting with this image:

This image is pretty busy. Let’s see if LLaVA can figure out what’s going on with it.

I’ll send the prompt:

what's in this image? /home/jeremy/test/bear.png

and it quickly spits back a description:

It’s that easy! It’s pretty impressive stuff. Let’s see what it can do.

Putting LLaVA to the Test

Sticking with the theme of absurd images to describe, here’s another:

LLaVA Description Result:

In the image, there is a scene that appears to be a staged photograph or an illustration meant for humorous effect. A large cat sits in the foreground of the photo, looking attentively towards the background where a group of people are gathered around an art exhibit. The cat has a surprised or contemplative expression, as if it is taking in the scene around it. The art exhibit features a painting with a group of people depicted within it, and the contrast between the two layers of the image is striking.

The background is filled with spectators who are observing the artwork, while the cat adds an unexpected and amusing element to the composition. This juxtaposition of a domestic animal in an intellectual setting creates a playful and whimsical atmosphere. The painting in the exhibit depicts people from what appears to be a historical period, dressed in clothing styles characteristic of that era.

The overall impression is one of an artistic or social commentary on the relationship between animals and humans, perhaps highlighting how animals are often included as part of human society and culture. It’s a blend of reality with a touch of fantasy, designed to evoke amusement or provoke thought.

Or how about this image:

LLaVA Description Result:

The image you’ve provided appears to be a creative or humorous representation rather than an actual photograph. It features the iconic figure of Abraham Lincoln, known as “Honest Abe,” depicted in his famous black and white portrait, sitting at a dining table with a modern twist—he is holding a cell phone and is in the middle of enjoying what looks like a fast-food meal.

The setting includes an American flag in the background and a “MADBARGE” sign, which is a play on words with “MADBARGE” being a misspelling of “Madison Bar & Grill,” a popular restaurant chain in the United States. The combination of historical iconography with modern-day food culture creates a striking contrast that is meant to be humorous or thought-provoking, perhaps commenting on how far American cuisine has come from the era in which Lincoln lived.

To say this is impressive is an understatement. This is all running from my mid-range laptop!!

It’s pretty impressive what it recognizes:

This is a digital painting of an Americana-style scene. It depicts a classic car parked on the side of a road with vintage signs, including one that reads “Welcome to Portland.” The architecture suggests a small town or city street, and in the background, there’s a snow-capped mountain, which could be Mount Hood or a similar peak, adding to the Pacific Northwest atmosphere. The artwork is rich in detail, capturing the essence of a nostalgic American road trip.

You can use this for classification purposes also:

The image shows a 2005 Ford Mustang parked outside. It is a sports car model characterized by its sleek design, large intake vents in the hood, and aerodynamic body lines. The vehicle appears to be well-maintained, with clean and shiny finishes on both the exterior paint and the chrome accents that adorn the front grille, hood vents, side mirror casings, and door handles.

The car features a hardtop convertible roof system, offering the choice between an enclosed cabin for protection from the elements or the option to lower the top for open-air driving experiences. The vehicle is equipped with a performance exhaust system, which is evident by the dual exhaust pipes located at the rear of the car.

The wheels on the Mustang are custom, likely designed for improved aesthetics and aerodynamics. They are finished in a chrome-like material that matches the other metallic parts on the vehicle. The Mustang’s body lines showcase a modern design with subtle contours that add to its sporty appeal.

In terms of the car’s overall condition, it appears to be well-cared for and maintained over time. The paint is shiny and free from any noticeable scratches or blemishes. The vehicle also features a number of customizations that are likely intended to enhance its visual appeal or performance capabilities.

In summary, the image showcases a 2005 Ford Mustang with various custom features such as unique wheels and exhaust system. It is presented in an urban setting near a building with a garage door, suggesting it might be used for storage or display purposes. The vehicle’s condition indicates that it has been well-cared for and maintained over time.

Ok, so it’s not a convertible. And I have no idea how it guessed what the pipes look like in the back, but it’s accurate! There are many cool use cases for this kind of thing.

Reading Text

A super helpful application for this can be reading text.

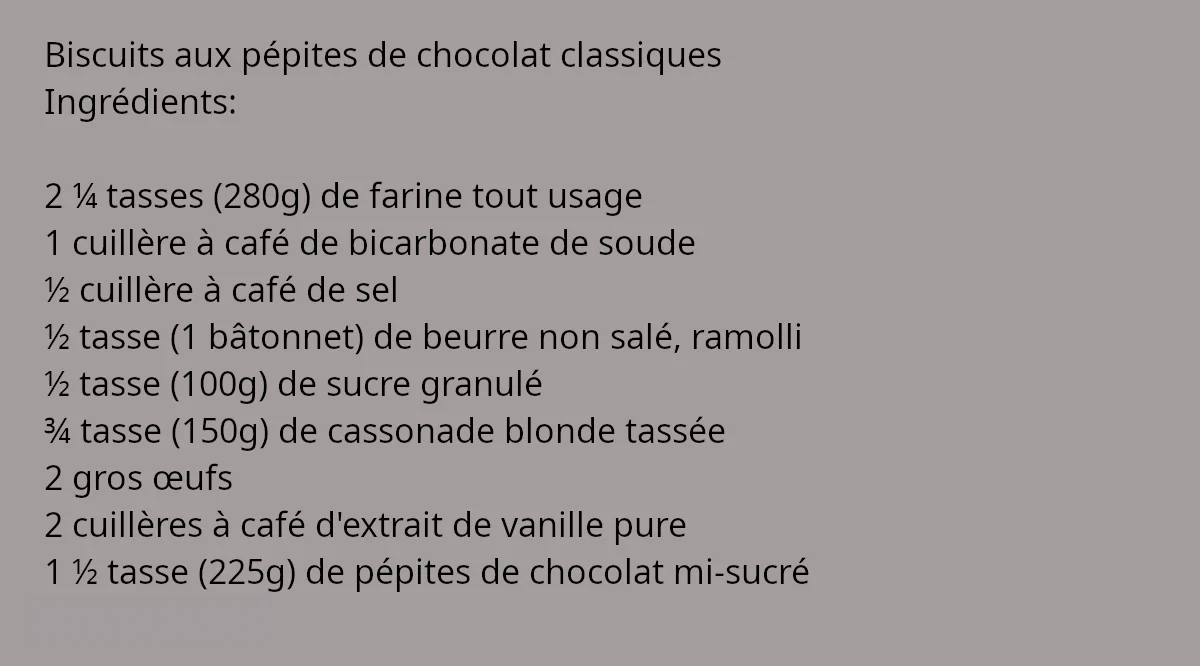

Remember that recipe example I mentioned earlier?

The image contains a list in French, which seems to be a shopping list or ingredients for cooking. Here is the

translation into English:

- 100 grams of chocolate chips

- 2 eggs

- 300 grams of sugar

- 200 grams of flour

- 1 teaspoon of baking powder

- 1/2 cup of coffee

- 2/3 cup of milk

- 1 cup of melted butter

- 1/2 teaspoon of salt

- 1/4 cup of cocoa powder

- 1/2 cup of white flour

- 1/2 cup of dark chocolate chips

- 1 teaspoon of vanilla extract

- 1/2 cup of chocolate syrup

The list is written in French and includes a variety of items commonly used in baking, such as chocolate chips,

eggs, sugar, flour, salt, cocoa powder, white flour, dark chocolate chips, and various liquids. The items are

listed in French with their English translations provided next to them for reference.

Yep. You can do this on your machine. We’re moving in the right direction.

Hardware Considerations

Most of the time, I run these models on machines with fast GPUs. I run Ollama frequently on my laptop, which has an RTX 4060. I have a big 4090 in my desktop machine, and they’re screaming fast.

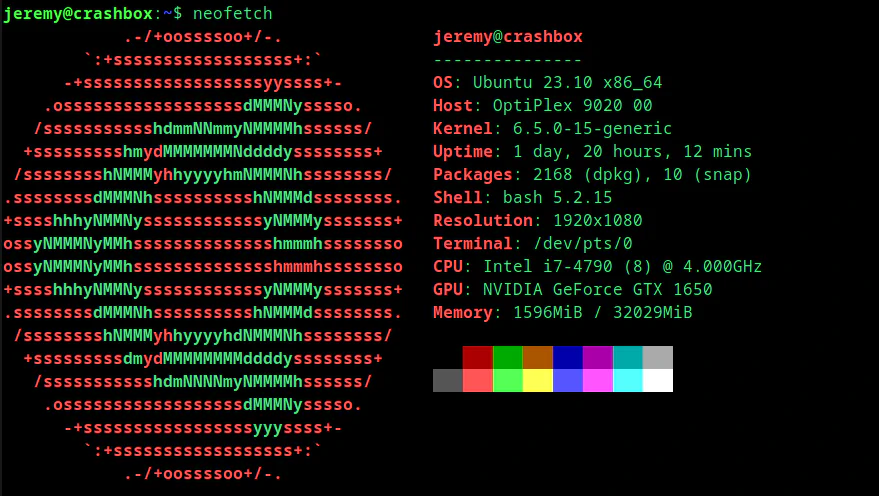

But you don’t need big hardware. I run an Ollama “server” on an old Dell Optiplex with a low-end card:

It’s not screaming fast, and I can’t run giant models on it, but it gets the job done. And as a special mention, I use the Ollama Web UI with this machine, which makes working with large language models easy and convenient:

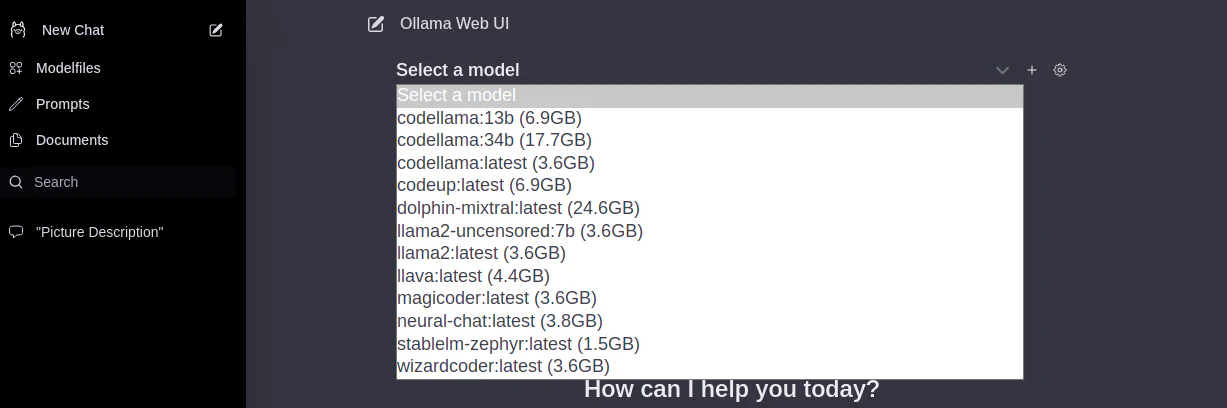

You can also easily download and swap models as much as your heart desires:

Check it out. You must have Ollama up and running, and you can get the full instructions from the GitHub page.

Summary

Running Local language models on your machine is fun and educational. Not only do you not have to pay monthly fees for a service, but you can also experiment, learn, and develop your own AI systems on your desktop. It’s a lot of fun.

If you have any questions or comments, feel free to reach out on Twitter or LinkedIn. I love talking about this stuff.

Skip the hype. The newsletter that keeps you in the know.

AI news curated for engineers. The AI New Hotness Newsletter is what you need.

Zero fluff. Just the research, tools, and infra updates that actually affect your production stack.

Stay up to date on AI for developers - Subscribe on LinkedIn